Why Web Search Is Now the Dominant Cost in Your Stack

Published / Last Updated May 4, 2026

Category Blog

Ceramic Team · May 2026 · 4 min read

Every frontier AI model starts your query with a web search. Grok does it. Claude does it. Gemini does it. It’s not an accident — it's an architectural necessity.

The problem of training cut-off

Large language models are trained on data with a cut-off date typically 6–9 months before the model's public release. This leaves a black hole in their data set. By the time a model reaches your users, it already knows nothing about the past several months of the world. So instead, search patches this gap at inference time. When a user submits a query, the model retrieves web results and folds that current information directly into its context window — effectively grounding its response in the present rather than the frozen past of its training data.

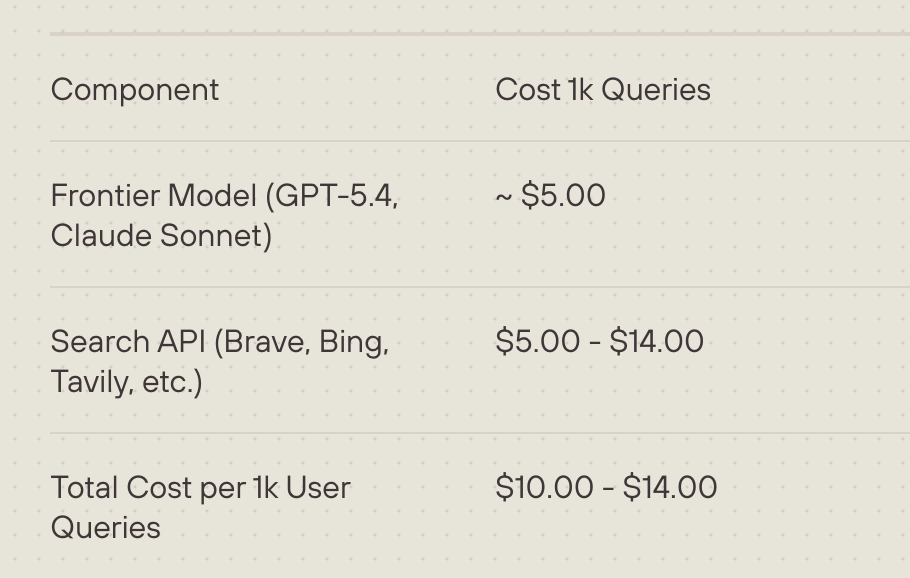

But here's what the pricing looks like today:

Why this matters for product decisions:

When models issue 1–5 searches per user query — which they do — search quickly becomes the largest cost in your stack. Not compute. Search.

Knee-jerk reactions include:

➡️ You limit search to premium users only

➡️ You cap the number of searches per session

➡️ You skip search for lower-stakes queries where it would still help

➡️ You can't afford to add search to smaller, cheaper open-source models

Cheaper search, no compromise: Ceramic.ai

When search costs drop to $0.05 per 1,000 queries — a fraction of inference pricing — the calculus flips. You can search every query. You can verify every model output before it reaches users. You can build agentic workflows that issue dozens of searches per task without the economics breaking down.